Researchers from Stanford University, the University of Washington, and the Allen Institute for AI have developed a method that makes building a reasoning-focused language model dramatically cheaper. Their s1 model was trained with less than $50 worth of computing resources, yet it achieves reasoning performance that was previously seen only in projects with far larger budgets.

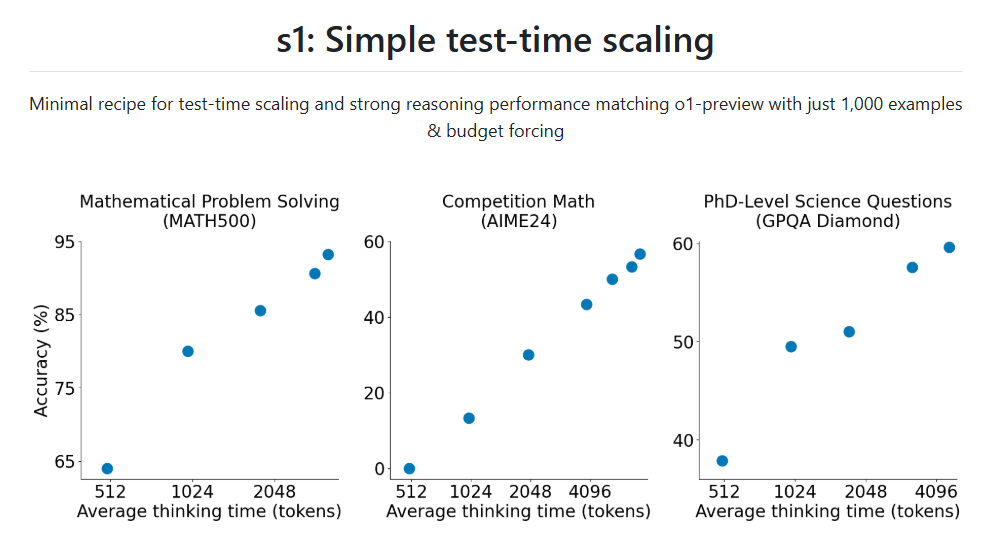

The researchers published their results at the end of January 2025, and the complete source code is publicly available on GitHub. The approach builds on test-time scaling: spending extra computation at inference time so that the model can reason for longer before it answers. The authors control this with a technique they call budget forcing. To cap the thinking time, the end-of-thinking delimiter is appended once a token budget is reached, which forces the model to commit to an answer. To extend the thinking time, the end-of-thinking token is suppressed whenever the model tries to stop too early, and the word "Wait" is appended instead, prompting the model to keep reasoning and reconsider its preliminary conclusions. This improves accuracy, especially on mathematical and logical tasks.
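The budget-forcing loop can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `next_token` callable stands in for a real language model, and the names `END_THINK`, `budget_force`, and `toy_model` are hypothetical. Budgets are counted in whole tokens, and each appended "Wait" counts toward the budget.

```python
from typing import Callable, List

END_THINK = "<end_think>"  # hypothetical end-of-thinking delimiter
WAIT = "Wait"              # string appended to extend reasoning


def budget_force(next_token: Callable[[List[str]], str], prompt: List[str],
                 min_tokens: int, max_tokens: int) -> List[str]:
    """Generate thinking tokens, overriding early stops and cutting off at max_tokens."""
    trace: List[str] = []
    while len(trace) < max_tokens:
        token = next_token(prompt + trace)
        if token == END_THINK:
            if len(trace) < min_tokens:
                # Too early: suppress the stop token and nudge the model onward.
                trace.append(WAIT)
                continue
            break  # the model stopped on its own within budget
        trace.append(token)
    # Close the thinking phase, whether the model stopped or hit the cap.
    trace.append(END_THINK)
    return trace


def toy_model(context: List[str]) -> str:
    """Stand-in model: tries to stop after three consecutive "step" tokens."""
    run = 0
    for token in reversed(context):
        if token != "step":
            break
        run += 1
    return END_THINK if run >= 3 else "step"


print(budget_force(toy_model, ["Q:"], min_tokens=5, max_tokens=20))
# ['step', 'step', 'step', 'Wait', 'step', 'step', 'step', '<end_think>']
```

With `min_tokens=5`, the toy model's first attempt to stop after three steps is overridden by "Wait", so it reasons for three more steps before it is allowed to finish. Setting `max_tokens=2` instead would cut the trace off after two steps.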

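Rather than training a model from scratch, the team fine-tuned an existing open model, Qwen2.5-32B-Instruct, on just 1,000 carefully selected examples (the s1K dataset), each consisting of a question paired with a reasoning trace and an answer. By contrast, other reasoning models are typically trained on hundreds of thousands or even millions of examples. The researchers selected the training examples based on three criteria: quality, difficulty, and diversity. The resulting model can solve complex problems such as AIME (American Invitational Mathematics Examination) questions and Ph.D.-level science questions.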
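The three selection criteria can be sketched as a filter pipeline. This is a simplified illustration under stated assumptions, not the published s1K pipeline: the `Example` fields `well_formed` and `solved_by_baseline` are hypothetical stand-ins for the real quality and difficulty signals, trace length is used as a rough proxy for difficulty, and domains are visited round-robin rather than with the authors' own sampling scheme.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Example:
    question: str
    trace: str                # reasoning trace paired with the question
    domain: str               # subject label, e.g. "geometry"
    well_formed: bool         # hypothetical quality flag
    solved_by_baseline: bool  # hypothetical difficulty signal


def select_examples(pool: List[Example], k: int) -> List[Example]:
    # 1. Quality: keep only well-formed examples.
    pool = [ex for ex in pool if ex.well_formed]
    # 2. Difficulty: drop questions a weaker baseline model already solves.
    pool = [ex for ex in pool if not ex.solved_by_baseline]
    # 3. Diversity: group by domain, longest (assumed hardest) traces first.
    by_domain: Dict[str, List[Example]] = {}
    for ex in pool:
        by_domain.setdefault(ex.domain, []).append(ex)
    for exs in by_domain.values():
        exs.sort(key=lambda e: len(e.trace), reverse=True)
    # Round-robin over domains so no single subject dominates the selection.
    domains = sorted(by_domain)
    chosen: List[Example] = []
    while len(chosen) < k and any(by_domain[d] for d in domains):
        for d in domains:
            if by_domain[d] and len(chosen) < k:
                chosen.append(by_domain[d].pop(0))
    return chosen
```

Applying the cheap quality filter first keeps the more expensive difficulty check (which, in practice, means running a baseline model) off examples that would be discarded anyway.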

The s1 model's accuracy improves further when it is allowed to spend more tokens on reasoning, which suggests that the extra thinking time is genuinely used to refine its answers. The research matters because it shows that competitive reasoning models can be built with minimal resources. The source code and dataset are published under the Apache 2.0 license, enabling other researchers to develop the method further and find new application areas.

Sources:

- Muennighoff, Niklas, Zitong Yang, Weijia Shi, Xiang Lisa Li, Li Fei-Fei, Hannaneh Hajishirzi, Luke Zettlemoyer, Percy Liang, Emmanuel Candès, and Tatsunori Hashimoto. "s1: Simple test-time scaling." arXiv preprint arXiv:2501.19393 (2025). https://arxiv.org/abs/2501.19393
