Meta researchers have achieved a breakthrough in the brain-computer interface field: they have developed a new tool capable of converting brain signals into text. In research published in February 2025, they studied how the brain produces language by recording magnetoencephalography (MEG) and electroencephalography (EEG) signals from 35 skilled typists. The technology is significant because, unlike the brain implants that currently dominate the field, it is non-invasive.

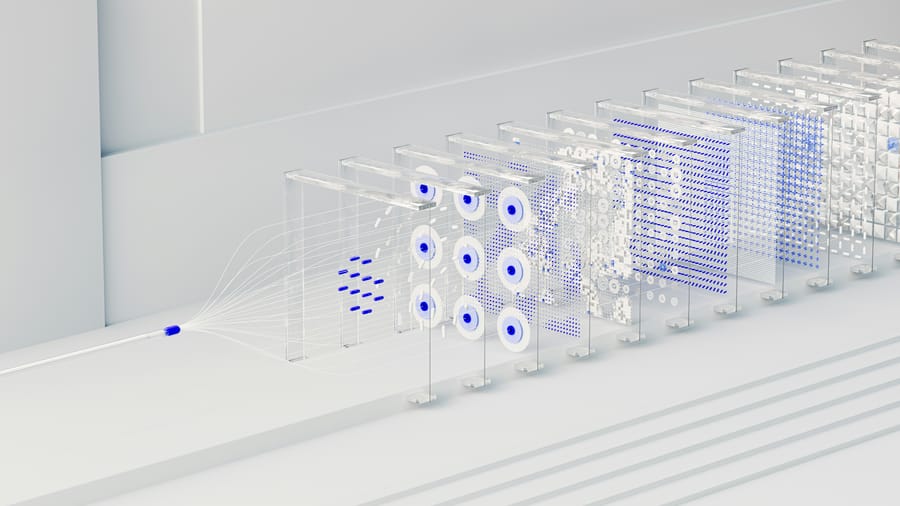

The team's deep learning system, Brain2Qwerty, learns to identify which keys a user is pressing after observing thousands of typed characters. With MEG, it achieves an average character error rate of 32%, significantly better than the 67% error rate achieved with EEG. According to Sumner Norman, founder of Forest Neurotech, the result shows once again that deep neural networks can reveal remarkable insights when paired with robust data.

The approach has serious practical drawbacks, however. It requires a high-performance MEG scanner, comparable in size to an MRI machine and costing approximately $2 million. Because it must detect the brain's extremely weak magnetic signals accurately, this half-tonne machine only works properly in a specially designed room shielded from outside magnetic interference. During scanning, the participant's head must remain completely still, as even the slightest movement can distort the data. These technical requirements currently limit the wider use of the technology. Still, researchers are working to reduce the size and cost of such systems to make them more accessible in the future.
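The character error rates quoted above can be made concrete with a short calculation. The article does not say exactly how Meta scored Brain2Qwerty's output, so the sketch below assumes the standard definition: CER is the minimum number of character insertions, deletions and substitutions needed to turn the decoded text into the text actually typed (the Levenshtein distance), divided by the length of the typed text. The function names and the example strings are illustrative only and are not taken from Meta's code.

```python
# Character error rate (CER), assuming the standard Levenshtein-based definition.
# Illustrative sketch only; not Meta's evaluation code.

def levenshtein(reference: str, hypothesis: str) -> int:
    """Minimum number of single-character insertions, deletions and
    substitutions needed to turn `hypothesis` into `reference`."""
    # At the start of each outer iteration, prev[j] holds the distance between
    # the first i-1 reference characters and the first j hypothesis characters.
    prev = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        curr = [i] + [0] * len(hypothesis)
        for j, hyp_char in enumerate(hypothesis, start=1):
            cost = 0 if ref_char == hyp_char else 1
            curr[j] = min(
                prev[j] + 1,         # reference char missing from hypothesis
                curr[j - 1] + 1,     # extra char in hypothesis
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1]


def character_error_rate(reference: str, hypothesis: str) -> float:
    """Edit distance divided by the number of characters in the reference."""
    if not reference:
        raise ValueError("reference text must not be empty")
    return levenshtein(reference, hypothesis) / len(reference)


if __name__ == "__main__":
    typed = "hello world"   # hypothetical text the participant typed
    decoded = "hallo wrld"  # hypothetical decoder output: 1 substitution, 1 deletion
    print(f"CER = {character_error_rate(typed, decoded):.1%}")  # CER = 18.2%
```

Under this definition, a CER of 32% corresponds to roughly one error for every three characters typed, while 67% means about two errors for every three. Note that CER can exceed 100% if the decoder inserts many spurious characters.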

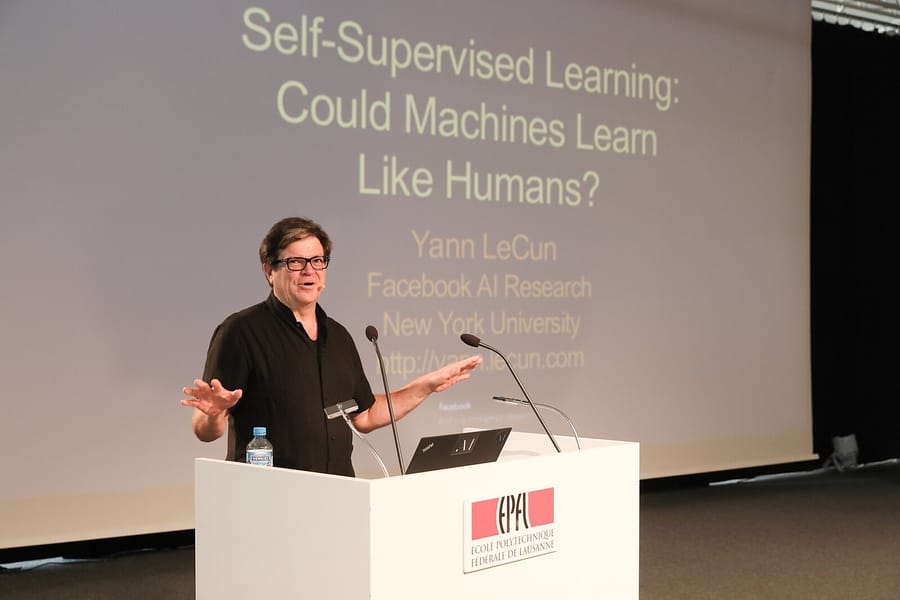

The research results confirmed the hierarchical predictions of linguistic theories: the brain activity preceding the production of each word is characterised by the successive appearance and disappearance of context-, word-, syllable- and letter-level representations. Jean-Rémi King, head of Meta's Brain & AI team, emphasised that these findings could prove crucial for the development of artificial intelligence, particularly language models, because they shed light on the mechanisms underlying human language processing.
