Alibaba has unveiled its latest artificial intelligence model, Qwen 2.5-Max, which the company claims outperforms leading competitors, including DeepSeek-V3, OpenAI’s GPT-4o, and Meta’s Llama-3.1-405B.

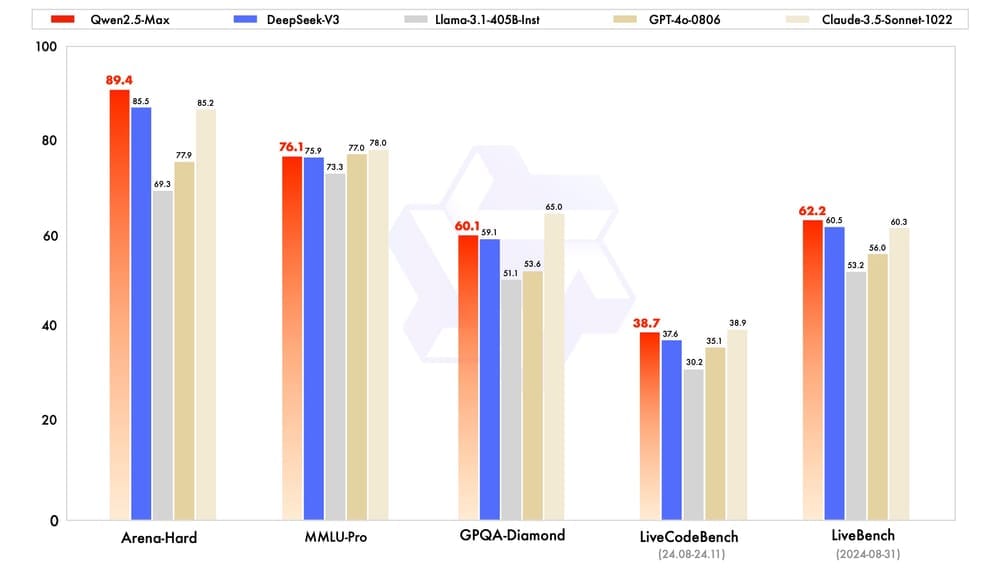

The model, built on a Mixture-of-Experts (MoE) architecture, was trained on more than 20 trillion tokens and then further refined with supervised fine-tuning (SFT) and reinforcement learning from human feedback (RLHF). It posted strong benchmark results: 89.4 points on Arena-Hard (versus 85.5 for DeepSeek-V3), 62.2 on LiveBench (DeepSeek-V3: 60.5), and 38.7 on LiveCodeBench (DeepSeek-V3: 37.6).

Qwen 2.5-Max is now available through the Qwen Chat platform, and developers can access it through Alibaba Cloud Model Studio, whose API is compatible with the OpenAI API. Alibaba plans to further improve the model’s reasoning and cognitive abilities by applying scaled reinforcement learning.
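Because the Model Studio endpoint follows the OpenAI API format, a request to it looks like a standard chat-completions call. The sketch below builds such a request using only the Python standard library. The base URL and model identifier are assumptions not stated in this article and should be checked against the Model Studio documentation; `build_chat_request` and `ask` are hypothetical helper names used for illustration.

```python
import json
import urllib.request

# Assumed values (not given in the article); verify against Model Studio docs.
BASE_URL = "https://dashscope-intl.aliyuncs.com/compatible-mode/v1"
MODEL = "qwen-max-2025-01-25"


def build_chat_request(prompt, api_key, base_url=BASE_URL, model=MODEL):
    """Build an OpenAI-style chat-completions request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt, api_key):
    """Send the request and return the text of the first choice."""
    request = build_chat_request(prompt, api_key)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # OpenAI-compatible responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

Calling `ask("Summarize MoE in one sentence.", api_key)` with a valid Model Studio key would send the request. Existing OpenAI client libraries should work the same way once their base URL points at the compatible endpoint.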
